ATD Blog

Running Learning Requires More Than Program Evaluation

If you looked at your current measures today, how many help you run the L&D function, not just evaluate your programs?

Mon Feb 09 2026

Early in my career, I believed program evaluation was the beginning and end of the learning measurement story. Beyond basic activity counts like registrations or “butts in seats,” most of my L&D colleagues, and later many of my clients, focused almost entirely on post-program surveys or the occasional impact or ROI study.

DEFINITIONS (So We Speak the Same Language)

Measurement: The process of collecting and interpreting information to understand the size, amount, or effect of something. In this post, I focus on two things we measure: learning programs and the L&D function.

Program evaluation: This measures the effectiveness, efficiency, and impact of a specific learning solution. It is one part of measurement.

That perspective did not come out of nowhere. Everything around me reinforced the same message: program evaluation equals L&D measurement. Over time, I came to see the consequence of that framing. L&D has built deep expertise in program evaluation, but it has underdeveloped measurement of the L&D function. As a result, L&D measures its programs fairly well but undermeasures the function itself, limiting its ability to reliably serve stakeholders.

How the Profession Reinforced a Narrow View of Measurement

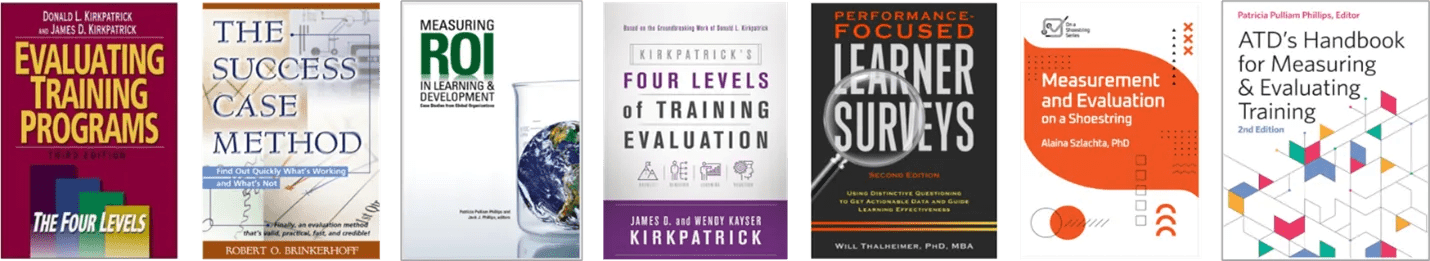

The profession has built significant capability in program evaluation. It has also framed “measurement” in ways that keep the focus anchored on programs.

Figure 1: Popular Books on Program Evaluation

The ATD capability model describes a broad L&D function that spans learning sciences, instructional design, delivery, technology application, knowledge management, coaching, and leadership development. Yet when the model explicitly names measurement, it largely does so through a single lens: evaluating impact.

The field’s literature reinforces the same message. Books, courses, webinars, and conference sessions train practitioners to think about measurement primarily through the lens of programs. This emphasis has increased rigor in evaluation but also sends a clear signal about what measurement should support. Measurement is program evaluation. Operational performance, functional efficiency, and system readiness sit largely outside the formal measurement conversation.

Program Evaluation and Measurement Serve Different Purposes

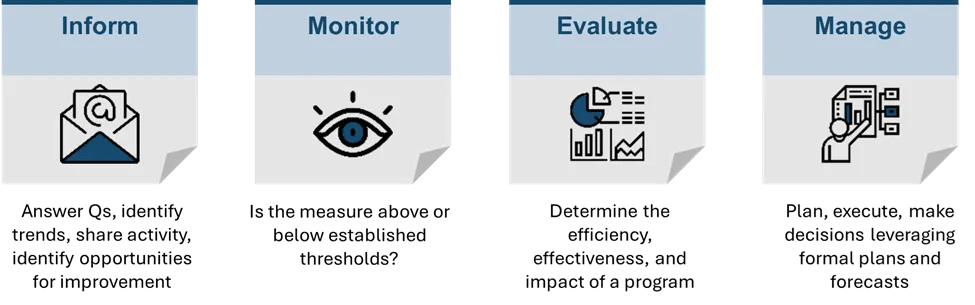

Measurement has multiple roles in L&D: to inform, monitor, evaluate, and manage.

Figure 2: The Four Reasons to Measure

Program evaluation sits primarily in Evaluate. It plays an essential role, but it represents only one part of the measurement system. To run learning well, leaders also need measures that inform decisions, monitor performance, and manage the L&D operation over time.

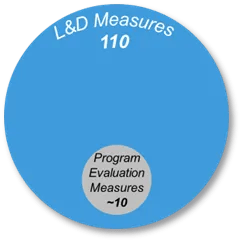

Figure 3: 10 Program Measures vs. 110 L&D Measures

Program evaluation also relies on a relatively small and well-established set of measures. Running the function requires tapping into the broader suite of 100 L&D measures.

What Should L&D Measure at the Organizational Level?

Once leaders move beyond program-only measurement, the focus shifts to how the L&D function performs as a system. Organizational-level measures help leaders answer questions such as:

Are we meeting the needs of the business? (stakeholder satisfaction, demand fulfilled versus requested, time-to-support for priority initiatives, alignment ratings for top initiatives)

Can we deliver work reliably and on time? (on-time delivery rate, cycle time from intake to launch, service level agreement attainment)

Where do capacity constraints limit our ability to respond? (instructor utilization, backlog volume, throughput trends)

Are our technology platforms enabling learning or creating friction? (platform adoption and active use, support tickets per 100 users, time-to-resolve issues)

Are vendors delivering quality, value, and consistency? (on-time deliverables, quality ratings, cost variance versus quoted price, renewal or escalation rate)

Are resources aligned to the highest-value work? (% effort on top priorities, spend by portfolio versus business priorities, strategic versus ad hoc work ratio, cost by work type)

Each question includes sample measures to illustrate what “organizational-level measurement” looks like in practice. The goal is not to track everything. The goal is to maintain a small, consistent set of operational indicators across delivery reliability, capacity, cost, technology health, and vendor performance. These are not vanity metrics; they are enabling metrics. They allow leaders to anticipate problems, make informed trade-offs, and address program quality before issues surface in evaluation results.
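
To make a few of these sample measures concrete, here is a minimal Python sketch of how four of them might be calculated: on-time delivery rate, cycle time from intake to launch, support tickets per 100 users, and vendor cost variance versus quoted price. Everything in it is an assumption for illustration: the `Project` record layout, the function names, and the sample figures are hypothetical, and your own definitions (for example, what counts as "on time" or as an active user) may reasonably differ.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean


@dataclass
class Project:
    """One L&D work request (hypothetical record layout)."""
    name: str
    intake: date    # date the request was received
    due: date       # committed launch date
    launched: date  # actual launch date


def on_time_delivery_rate(projects):
    """Share of projects launched on or before the committed date.

    Assumes a non-empty list of completed projects.
    """
    on_time = sum(1 for p in projects if p.launched <= p.due)
    return on_time / len(projects)


def mean_cycle_time_days(projects):
    """Average number of calendar days from intake to launch."""
    return mean((p.launched - p.intake).days for p in projects)


def tickets_per_100_users(tickets, active_users):
    """Support tickets normalized per 100 active platform users."""
    return 100 * tickets / active_users


def cost_variance_pct(quoted, actual):
    """Vendor cost variance as a percentage of the quoted price."""
    return 100 * (actual - quoted) / quoted


# Illustrative data only, not drawn from any real organization.
projects = [
    Project("Onboarding refresh", date(2025, 1, 6), date(2025, 3, 1), date(2025, 2, 24)),
    Project("Sales enablement", date(2025, 1, 20), date(2025, 3, 15), date(2025, 3, 29)),
    Project("Manager essentials", date(2025, 2, 3), date(2025, 4, 30), date(2025, 4, 30)),
    Project("Compliance update", date(2025, 2, 10), date(2025, 3, 31), date(2025, 3, 20)),
]

print(f"On-time delivery rate: {on_time_delivery_rate(projects):.0%}")
print(f"Mean cycle time: {mean_cycle_time_days(projects):.1f} days")
print(f"Tickets per 100 users: {tickets_per_100_users(45, 1500):.1f}")
print(f"Vendor cost variance: {cost_variance_pct(20000, 23000):+.1f}%")
```

The point of the sketch is that each indicator is a simple ratio or average over data most L&D teams already hold in intake logs, help desk reports, and vendor invoices. The harder work is agreeing on the definitions and tracking them consistently over time.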

How Organizational Measurement Supports Robust Programming

Program effectiveness and operational efficiency are not competing priorities. Programs perform through systems. Systems require measurement.

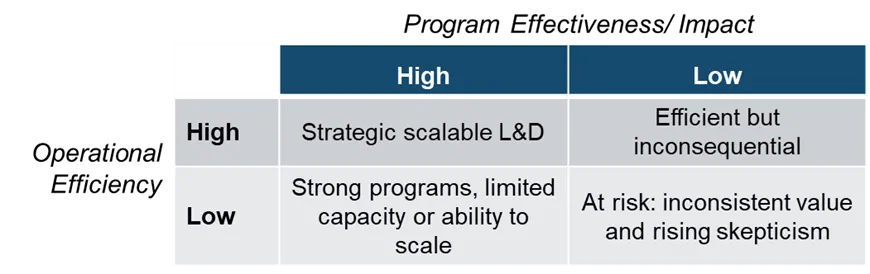

Strong programs without operational capability strain to scale. Efficient operations without effective programs deliver little value. The interaction between the two determines whether L&D can consistently meet stakeholder expectations.

Figure 4: Importance of Program and Operational Measures

Program evaluation strengthens decisions about learning design and impact. Organizational measures strengthen decisions about capacity, delivery, and trade-offs. With both, L&D can ensure program quality while improving predictability and scale.

Manage the Function, Not Just Programs

Sustainable measurement requires L&D leaders to broaden their thinking about measurement and what they expect it to support. Program evaluation should remain rigorous and visible. At the same time, leaders need organizational-level measures to inform, monitor, and manage the learning function itself.

When L&D treats measurement as a management discipline, it gains the visibility needed to run the operation well and support robust programming. Running learning like a business means leaders can forecast demand, manage capacity, make trade-offs explicit, and deliver reliably, not just report results after the fact.

If you looked at your current measures today, how many help you run the L&D function, not just evaluate your programs?
