ATD Blog

A Better Framework for L&D Measurement and Analytics

Mon Jan 11 2021

Too many L&D practitioners struggle to create a robust measurement and reporting strategy that meets their stakeholders’ needs. They don’t know what measures to include, are confused about how to define them, and have no guidance about how to report them. Most practitioners don’t even know where to start. It shouldn’t be this hard.

Our new book, Measurement Demystified: Creating Your L&D Measurement, Analytics, and Reporting Strategy, was written to address this important need. We want to demystify the process by providing an easy framework based on Talent Development Reporting principles (TDRp) for classifying, selecting, and reporting the most appropriate measures to meet your needs. TDRp is an industry-led effort begun in 2010 to bring standards and best practices to L&D in particular and HR in general. TDRp has evolved and grown during the last 10 years into a holistic and comprehensive framework that provides guidance on all aspects of creating a measurement and reporting strategy.

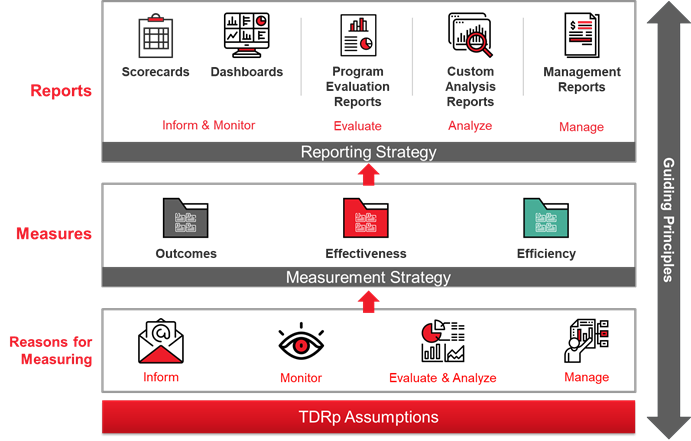

We believe the starting point for a good strategy is with the users and the reasons to measure. We also believe a framework should be simple and easy to use, so TDRp groups all the possible reasons to measure into four categories. This makes communication about measures much easier because we have a common language and classification scheme, much like accountants have in generally accepted accounting principles (GAAP). The four overarching reasons are to inform, monitor, evaluate and analyze, and manage.

Inform means the measures are used to answer questions and identify trends. Monitor implies that the value of the measure will be compared to a threshold to determine if the value is within an acceptable range. Evaluate and analyze refers to the use of measures to determine if a program is efficient and effective or whether there is a relationship among key measures like the amount of training and employee engagement scores. Lastly, manage describes the use of measures to set plans, review monthly progress against those plans, and take appropriate action to come as close as possible to delivering the promised results by year-end. Upfront discussions with users should identify their reason for measuring, which will be important when we select the right report for their measures.

The next element in the framework is a classification scheme for the types of measures. Again, our goal is to make it simple to remember and simple to use. We have more than 170 measures just for L&D, so we need a way of grouping them that will be helpful. We suggest efficiency, effectiveness, and outcome. Efficiency measures answer, “How much?” with data like the number of participants or costs. Effectiveness measures answer, “How well?” with data about participant reaction or application of learning. Outcome measures answer, “What is the impact of the training?” through data that quantifies the impact on organizational goals such as financial, customer, operational, or people. The classification framework makes it easier to provide guidance in selecting the right measures.

All programs should have at least one efficiency and one effectiveness measure. More often, a single program will have several of each. If the program supports a key organization goal like increasing sales or employee engagement, then it should also have an outcome measure like impact on sales. TDRp provides guidance for measurement selection based on the type of program, and the book includes definitions, formulas, and guidance on over 120 measures, including those which are benchmarked by ATD and recommended by ISO.

Once you select your measures, you need to decide how to share them. TDRp again provides guidance to simplify your decision making. We start with a framework of five types of reports: scorecards, dashboards, program evaluation reports, custom analysis reports, and management reports. Each type of report maps to the reason for measuring; once you have had the upfront discussion with the user and understand their needs, TDRp indicates which report best meets that need.

A scorecard is simply a table with rows and columns in which the rows are the measures and the columns are the time periods (such as months or quarters). Scorecards work well if you want to see detailed data to answer questions about the number of participants by month and whether there is a trend in the data. In contrast to a scorecard, a dashboard contains summary data and often has visual components like graphs or bar charts. A dashboard may also be interactive, allowing you to drill down into the data. Scorecards and dashboards are used when the reason for measuring is to inform. Both may also be used when the reason is to monitor, but in this case, you would also include color-coding or threshold levels to alert you that the measure is within (or outside) an acceptable range.
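For readers who build their reports in code, here is a minimal sketch of a scorecard used for monitoring. It is only an illustration, not something taken from TDRp or the book: the measure names, the monthly values, the acceptable ranges, and the function names `status` and `monitor` are all made up for the example.

```python
# Hypothetical scorecard: rows are measures, columns are months.
# All names and numbers below are invented for illustration.
scorecard = {
    "Participants": {"Jan": 120, "Feb": 135, "Mar": 150},
    "Participant reaction (1-5)": {"Jan": 4.2, "Feb": 3.7, "Mar": 4.4},
}

# Hypothetical acceptable ranges as (low, high); None means no limit on that side.
thresholds = {
    "Participants": (100, None),
    "Participant reaction (1-5)": (4.0, 5.0),
}


def status(value, low, high):
    """Return 'green' if value is inside the acceptable range, else 'red'."""
    if low is not None and value < low:
        return "red"
    if high is not None and value > high:
        return "red"
    return "green"


def monitor(scorecard, thresholds):
    """Color-code every cell of the scorecard against its measure's range."""
    return {
        measure: {
            period: status(value, *thresholds[measure])
            for period, value in periods.items()
        }
        for measure, periods in scorecard.items()
    }


if __name__ == "__main__":
    for measure, flags in monitor(scorecard, thresholds).items():
        print(measure, flags)
```

The same table serves both purposes: printed as-is it informs, and with the color flags added it monitors.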

A program evaluation report is used when the reason to measure is to evaluate a program for its efficiency and effectiveness. You typically generate a program evaluation report at the conclusion of the program and brief the stakeholders. Similarly, you use a custom analysis report to brief stakeholders on the results of a statistical analysis of the relationships among measures (for example, the extent to which training impacts retention or engagement). The last type of report is the management report, which you should use when the reason to measure is to manage. Management reports require setting specific, measurable plans (targets) for key measures and generating monthly reports to compare year-to-date results against plan and to compare forecast for year-end against plan. These reports have the same look and feel as reports used by sales and manufacturing departments.
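The two comparisons in a management report can be sketched in a few lines. This is a rough illustration under our own assumptions, not the book's report layout: the class name `ManagedMeasure`, its field names, and the sample numbers are hypothetical, and we assume year-to-date results are expressed as a percentage of the annual plan.

```python
from dataclasses import dataclass


@dataclass
class ManagedMeasure:
    """One row of a hypothetical management report."""
    name: str
    annual_plan: float   # target set at the start of the year
    ytd_actual: float    # year-to-date result
    forecast: float      # current estimate of the year-end result

    def ytd_percent_of_plan(self) -> float:
        # Year-to-date results compared against plan (assumed: as a percent).
        return 100 * self.ytd_actual / self.annual_plan

    def forecast_vs_plan(self) -> float:
        # Year-end forecast compared against plan; negative means a shortfall.
        return self.forecast - self.annual_plan


if __name__ == "__main__":
    # Invented numbers for illustration only.
    row = ManagedMeasure("Participants", annual_plan=1000, ytd_actual=450, forecast=950)
    print(f"{row.name}: {row.ytd_percent_of_plan():.0f}% of plan year to date, "
          f"forecast vs. plan: {row.forecast_vs_plan():+.0f}")
```

A negative forecast-versus-plan value is the signal to take action before year-end, which is the point of measuring to manage.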

There are three varieties of management reports:

The program report provides detail on learning programs in support of a single organization goal such as increasing sales.

The operations report shows data aggregated across all courses for those measures the CLO is managing for improvement.

The summary report shows how learning is aligned to the CEO’s goals as well as other important organization needs and includes the key measures for each. This report is shared with the CEO and governing bodies to show the alignment and impact of L&D.

Last, we share all the elements of a measurement and reporting strategy with step-by-step guidance to make it easy for you to create your own. We also include a sample strategy in the appendix you can use as a template. Our hope is that the TDRp framework combined with the detailed guidance and lots of examples will take the mystery out of measurement and make it easy for you to create your own measurement and reporting strategy.