ATD Blog

Evidence-Based Survey Design: “Strongly Agree” on the Left or Right Side of the Likert Scale?

Wed Sep 18 2019

How many survey questionnaires have you completed during your career? Probably quite a few. Some of the survey items you completed likely used the bipolar Likert Scale, with agreement options on one side, disagreement options on the other side, and neutral in the middle. You may also have designed your own survey questionnaires using the Likert Scale or similar styles, for example, with satisfaction options on one side and dissatisfaction options on the other.

When using these scales, there are two ways of listing response options—in ascending or descending order—depending upon whether you list the most positive response option on the left or right side of the response scale. In the following survey item, for example, which order of the Likert Scale options would you use? Does it matter?

I feel valued at work.

○ Strongly agree ○ Agree ○ Neutral ○ Disagree ○ Strongly disagree (horizontal descending order)

I feel valued at work.

○ Strongly disagree ○ Disagree ○ Neutral ○ Agree ○ Strongly agree (horizontal ascending order)

Research has shown that the order of response options, descending or ascending, can make a difference in the survey results. Before we talk about the effects of response order, we need to understand some aspects of human psychology and behavior.

Think about this: you’re ordering food at a restaurant, and the server is telling you several available salad dressing or wine options. You listen and, despite all the choices, find yourself selecting one of the last ones listed. This is called a recency effect: people tend to select the options that they see or hear at the end of the response option list. The recency effect tends to happen when options are presented orally.

When the response options are written in self-administered survey questionnaires, a few outcomes can occur. The primacy effect happens when survey respondents select the options presented at the beginning of a horizontally listed set of response options (as shown above). Similarly, when the text is written from left to right, survey respondents tend to select what’s written on the left side of the response scale, which is known as left-side selection bias. Survey respondents also tend to agree rather than disagree with the statement provided, an outcome known as acquiescence bias or yea-saying bias. This choice is also related to respondents’ desire to select options they think are more socially acceptable (often the positive ones) instead of expressing their true thoughts, which is known as social-desirability bias. Finally, survey respondents tend to select options that are simply satisfactory (good enough) to minimize their cognitive effort in completing the survey, which is known as satisficing behavior.

When given written self-administered survey items with response options presented horizontally, survey respondents usually select among the ones that:

they read first on the left side (primacy effect and left-side selection bias)

indicate agreement, which is more socially acceptable and does not require too much thinking to decide (acquiescence bias, social-desirability bias, and satisficing).

So, what happens when you present Likert or Likert-type scale response options in ascending or descending order? Research has shown mixed results:

1. Descending-ordered response options result in statistically significantly more positive ratings.

2. Different response orders do not make a statistically significant difference in survey ratings.

Between the two, however, the first type of result seems to be more frequent. Why could that be?

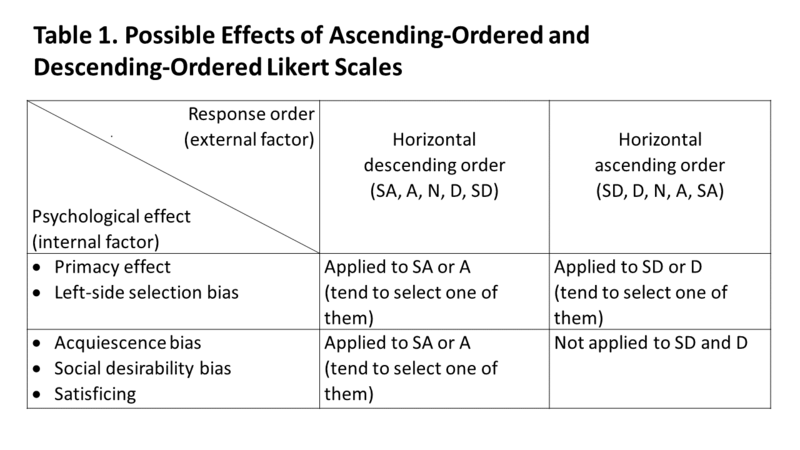

As shown in Table 1, the left-side selection bias may apply to both ascending- and descending-ordered response scales. When acquiescence bias, social-desirability bias, and satisficing behavior also come into play with descending-ordered response scales, you will likely see more positive average survey ratings.

With that in mind, also think about this—if you were to develop survey items with an 11-point scale to rate, for example, clarity in writing, which one of the following response scales should you be concerned about for inflated average scores due to response order effects?

1. Clear 10 9 8 7 6 5 4 3 2 1 0 Unclear

2. Clear 0 1 2 3 4 5 6 7 8 9 10 Unclear

3. Unclear 10 9 8 7 6 5 4 3 2 1 0 Clear

4. Unclear 0 1 2 3 4 5 6 7 8 9 10 Clear

Research found that the first scale, which puts the positive wording (“Clear”) and the highest numerical value (“10”) on the left side, resulted in the highest average score.

Some research, though, showed no statistically significant differences between the ascending- and descending-ordered response scales on the average survey ratings.
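If you want to check whether response order matters in your own survey, one option is to give half of your respondents the descending form and the other half the ascending form, and then compare the average ratings. The sketch below is only an illustration of that idea: the ratings are made-up numbers, and `permutation_test` is a hypothetical helper, not the method used in the studies mentioned above. It assumes responses on both forms are coded 1 to 5, with 5 meaning "Strongly agree."

```python
import random
from statistics import mean


def permutation_test(desc_scores, asc_scores, n_resamples=10_000, seed=0):
    """Two-sided permutation test on the difference in mean ratings.

    Returns (observed difference, approximate p-value).
    """
    rng = random.Random(seed)  # fixed seed so the result is reproducible
    observed = mean(desc_scores) - mean(asc_scores)
    pooled = list(desc_scores) + list(asc_scores)
    n_desc = len(desc_scores)

    extreme = 0
    for _ in range(n_resamples):
        # Shuffle the pooled ratings and re-split them into two groups,
        # as if the form each respondent received had no effect.
        rng.shuffle(pooled)
        diff = mean(pooled[:n_desc]) - mean(pooled[n_desc:])
        if abs(diff) >= abs(observed):
            extreme += 1

    # Add-one correction keeps the estimated p-value above zero.
    p_value = (extreme + 1) / (n_resamples + 1)
    return observed, p_value


# Made-up ratings for "I feel valued at work." (1-5, 5 = Strongly agree).
descending_form = [5, 4, 5, 4, 4, 5, 3, 4, 5, 4]
ascending_form = [4, 3, 4, 4, 3, 5, 3, 4, 4, 3]

diff, p = permutation_test(descending_form, ascending_form)
print(f"Mean difference (descending - ascending): {diff:.2f}, p = {p:.3f}")
```

A small p-value would suggest that the two forms produce different average ratings in your sample; a large one would be in line with the studies that found no statistically significant difference. With samples this small, either outcome should be read cautiously.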

Also, what if you present Likert Scale options (as shown below) or other Likert-type scale response options vertically? Will you see top selection bias (similar to left-side selection bias)?

I feel valued at work.

○ Strongly agree
○ Agree
○ Neutral
○ Disagree
○ Strongly disagree
(vertical descending order)

I feel valued at work.

○ Strongly disagree
○ Disagree
○ Neutral
○ Agree
○ Strongly agree
(vertical ascending order)

Again, research shows mixed results. Some studies have not clearly shown top selection bias from vertically presented response options, but some did.

What does this all mean to you as a practitioner when designing your survey items with Likert-type response scales? You intend to use your survey data as part of your evidence-based practice; therefore, it is important that you collect data that is as accurate as possible. Now that you know descending-ordered response scales can give you inflated, inaccurate data, here are some suggestions:

If you suspect that your survey items may be prone to showing inflated data when using descending-ordered response scales, use ascending-ordered response scales.

Provide clear directions about the order of response options because some survey respondents may use the “first-is-best” heuristic method and assume the far-left option is the most positive option without carefully reading it.

If you choose to use descending-ordered response scales, you may want to make your survey questionnaire short because fatigued survey respondents (due to lengthy survey items) are more prone to response-order effects.
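If you switch between ascending and descending scales, or run both forms side by side to compare them, it helps to score each answer by its label and not by its position on the screen. The following is a minimal sketch of that idea, assuming a five-point agreement scale coded 1 to 5; the names `score_response` and `assign_order` are hypothetical and not taken from any survey tool.

```python
import random

# Labels listed from most negative to most positive.
LABELS_ASCENDING = [
    "Strongly disagree",
    "Disagree",
    "Neutral",
    "Agree",
    "Strongly agree",
]

# The score depends only on the label: 1 = Strongly disagree ... 5 = Strongly agree.
SCORE_BY_LABEL = {label: i + 1 for i, label in enumerate(LABELS_ASCENDING)}


def displayed_options(order):
    """Return the labels in the order they are shown, left to right."""
    if order == "ascending":
        return list(LABELS_ASCENDING)
    if order == "descending":
        return list(reversed(LABELS_ASCENDING))
    raise ValueError(f"unknown order: {order!r}")


def score_response(order, position):
    """Score the option chosen at a 0-based display position."""
    label = displayed_options(order)[position]
    return SCORE_BY_LABEL[label]


def assign_order(respondent_ids, seed=0):
    """Randomly give half of the respondents each form (assumed 50/50 split)."""
    rng = random.Random(seed)
    ids = list(respondent_ids)
    rng.shuffle(ids)
    half = len(ids) // 2
    return {
        rid: ("ascending" if i < half else "descending")
        for i, rid in enumerate(ids)
    }


# The far-left option scores 5 on the descending form and 1 on the ascending form.
print(score_response("descending", 0), score_response("ascending", 0))
```

Scoring by label keeps the numbers comparable no matter which form a respondent saw, so a change in display order does not silently flip the meaning of the data.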
