ATD Blog

Taking the Fear Out of Training Evaluation

Wed Feb 20 2019

Aaron read the email from the division vice president: In 2019 and forward, all division-wide training initiatives will be preceded by a business case and fully evaluated to show their impact.

“How do I do that?” Aaron thought.

You may be feeling the same uncertainty about training evaluation and showing the value that training brings to your organization. Here are a few tips to build your knowledge and confidence.

Define the Desired Results

If, like Aaron, your leaders want a business case before they approve new training initiatives, don’t worry. It means they recognize how critical training is to achieving the organization’s goals. Try to see things from their perspective. Training teams often jump to solutions before having a full understanding of the situation or of the results business stakeholders want to achieve.

You need to understand the underlying problem that generated the training request so you can recommend a course of action that will solve it. Keep in mind that the ultimate purpose of training is to measurably contribute to key organizational results by improving on-the-job performance.

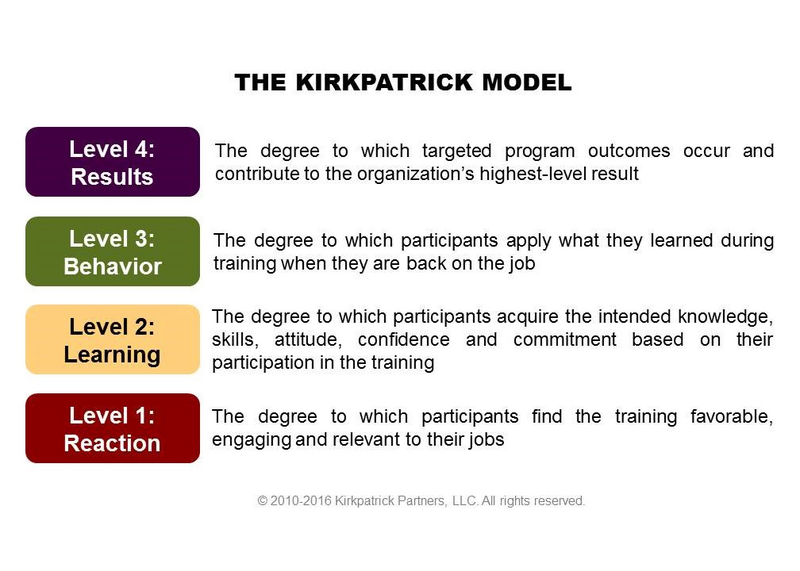

Kirkpatrick's Four Levels provide a simple framework to illustrate this: Gerald Jones, CPLP and performance consultant with Booz Allen Hamilton, asks stakeholders questions such as these to discover program needs:

In a perfect world, describe how [insert problem area] would function. How would the business be affected? How does this differ from the outcomes you’re seeing today?

Which behaviors do you see contributing to today’s poor outcomes? Which specific behaviors do you want employees to perform as a result of completing this training?

What will we need to do to ensure that training graduates do what they are supposed to do after training? (You might also ask trusted line managers and supervisors these same questions.)

Organizational leaders will be most interested in data from Levels 4 and 3, so focus your evaluation efforts on these areas. You should also capture some feedback about how much participants learned and how they felt about the program (Levels 2 and 1). Draft a short, focused list of key information that will be most useful to you and use it to guide the evaluation you conduct.

Build Evaluation Tools As You Build the Program

Once you are clear on the information you need at each of the four levels, build your evaluation tools as you build your training program. The best evidence of training success is a combination of quantitative numeric data and the qualitative stories that explain the data and bring it to life.

The tools and data for Level 4 evaluation generally already exist. Whichever failing metrics contributed to your original business case are likely the same ones your stakeholders will use to measure program success. Try to gain access to sales, expenditure, customer retention, or other relevant reports.

Similarly, data to evaluate Level 3 behavior may be found in production reports, customer service logs, or other such documents. Design a few survey or interview questions to obtain the stories behind the numbers. Build tools into your training that can become job aids when graduates are back on the job; for example, create checklists for multi-step processes or reference sheets for commonly used tools.

To the extent possible, build touchpoints for evaluating Levels 2 and 1 right into your instructional design. Formative evaluation techniques, such as pulse checks, group exercises, teach-backs, and competitive games, provide great insights that would otherwise go unnoticed.

Just a few questions on a post-program survey are usually sufficient to gather data about the level of participant satisfaction.

Evaluate Data and Adjust the Plan Along the Way

To maximize training impact, use formative evaluation data to make on-the-spot changes during training. For example, a great tool Gerald has used to help participants see the relevance of training content to their jobs is the impromptu brainstorming session. Conduct a table exercise to brainstorm ways participants could apply the training to their jobs, and have each team share and discuss their results.

Review post-training evaluation data for feedback that might indicate challenges in on-the-job application. Report any issues to managers and stakeholders, and ask for their assistance in resolving them.

Encourage graduates to talk about their successes (big and small) and how they are overcoming challenges. Team meetings, company newsletters, intranet pages, and other formal or informal media outlets provide excellent opportunities to share these wins.

Take the First Step

The most important thing you can do is take the first steps:

Select a mission-critical program.

Find a program champion.

Implement these ideas.

Document what works and doesn’t work in your organization.

Over time, you will form an organizational evaluation strategy that aligns with your leaders’ desired business results. Before you know it, all important initiatives will have ongoing evaluation as part of the plan—and your training team’s impact will be undeniable.
